Beyond RCTs: How Rapid-Fire Testing Can Build Better Financial Products

If a mobile money provider offered a bonus on peer-to-peer transfers, would that be enough to generate new business?

If the provider sent weekly messages reminding mobile account holders to actively use branchless banking services, would that significantly change their behavior?

For the past three years, Innovations for Poverty Action (IPA) has been working closely with Pakistan’s leading mobile money provider, Telenor Easypaisa, to explore answers to such questions and increase adoption and usage of the Easypaisa mobile money platform among the country’s unbanked poor. Through advanced marketing campaigns, we have run several experiments with Easypaisa, such as varying SMS content by the type and amount of incentive offered and testing targeted referral programs, to evaluate the most promising ways to increase active and sustained use of mobile money. Recently, the partnership also employed network analysis to better target and match existing customers with friends and family – in essence building the type of social network that is crucial to any technology adoption.

Due to several factors—troves of existing data, a massive user base, modest adjustments to the client experience, and a drive for results within a business cycle time frame—this partnership resembles few others in IPA’s portfolio. Yet this study and others like it represent a new path in experiments that help the world’s poor: rapid-fire testing.

Nimble RCTs

Also known as A/B tests or split tests, rapid-fire tests (RFTs) are nimble randomized controlled trials (RCTs) specifically aimed at improving product design. They are already common practice among technology-based companies as a way to iterate on and rapidly improve their products, as well as expand their user base. A simple example of an A/B test is to display two different landing pages to visitors in order to track which version of the page (A or B) gives a higher conversion rate. Crucially, the page shown to each user is randomly assigned, for instance by IP address, client segment or other criteria.
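The landing-page example can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function names, the `salt` value and the use of a hashed user identifier as a stand-in for a random draw are all hypothetical, and are not taken from any provider's actual system.

```python
import hashlib


def assign_variant(user_id: str, salt: str = "landing-page-test") -> str:
    """Map a user to page 'A' or 'B' with roughly 50/50 odds.

    Hashing the (hypothetical) user identifier gives an assignment that is
    effectively random across users but stable for any one user, so a
    returning visitor always sees the same page.
    """
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).hexdigest()
    return "A" if int(digest, 16) % 2 == 0 else "B"


def conversion_rates(visits):
    """Compute the conversion rate per variant.

    `visits` is an iterable of (user_id, converted) pairs, where
    `converted` is True if the visitor completed the target action.
    """
    totals = {"A": 0, "B": 0}
    conversions = {"A": 0, "B": 0}
    for user_id, converted in visits:
        variant = assign_variant(user_id)
        totals[variant] += 1
        conversions[variant] += int(converted)
    # Guard against division by zero when a variant received no visitors.
    return {v: (conversions[v] / totals[v] if totals[v] else 0.0) for v in totals}
```

Calling `conversion_rates` on a log of visits returns one rate for page A and one for page B. Whether the gap between them is more than noise is a separate question that depends on sample size.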

Rapid-fire RCTs are being applied to more than just websites, however. In fact, they can be used to answer a number of questions about the demand for financial products. Before delving into these fascinating studies, it’s helpful to know what they can and cannot do.

What Do We Mean by Rapid-Fire Testing?

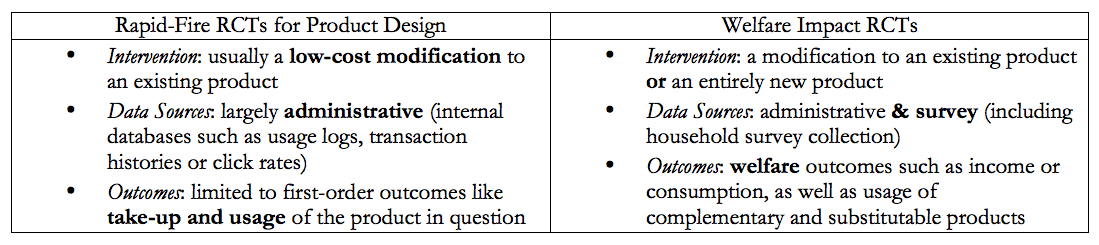

Rapid-fire tests can be categorized as RCTs because the core of their design relies on measuring client outcomes through randomly assigned variations in a product or experience. But there are other characteristics that set a rapid-fire test apart from other types of RCTs that IPA runs:

To reiterate, both of these study types are RCTs because each is an experiment which deploys an intervention to a randomly assigned treatment group. And rapid-fire tests engender just as much confidence in the causality of their results as would any RCT.

Pros and Cons

The crucial differences listed above give rise to advantages and limitations of a rapid-fire test. Advantages include:

- Bigger datasets: Study designs can accommodate very large samples by using low-cost, scalable interventions and administrative datasets. For example, it can be easier to analyze historical transaction data than to design, conduct and analyze time-consuming household surveys.

- Cheaper implementation: Rapid-fire tests rely on easily scalable interventions, often powered by high-tech platforms, which lower the costs of implementation. For example, the cost of sending different types of marketing messages is very low compared to developing and implementing a new insurance product.

- Faster results: By focusing on short-term outcomes such as take-up and usage, rapid-fire tests are designed to provide faster results than traditional RCTs, whose welfare outcomes take longer to manifest, measure and analyze.

RFTs are not without their limitations, however. For instance:

- They can be atheoretical: Because RFTs are so easy to deploy, it can be easy to run thousands of tests over every observable characteristic, devoid of theory. This is often the case in the tech world. For example, which theory would predict that these website buttons had wildly different performances? Without a grounded theory of change, the research cannot address broader questions about human behavior, which is why IPA’s studies, whether looking at product design or welfare impacts, are always accompanied by well-founded theories of change.

- Small effect sizes: Because RFTs rely on incremental change to existing products or services, researchers usually expect the marginal impact to be small (but cost-effective).

- Limited outcomes measurement: By using administrative data, we limit our ability to measure outcomes to those that are captured by institutional records, such as whether an account was opened or how often a user transacted. Broader statements about client welfare rely on theory or prior evidence, for example that increased savings balances lead to improved wellbeing.

- They require large samples: Small anticipated effects mean that large sample sizes are usually necessary to detect an impact. If the product you would like to test doesn’t yet have thousands of users, it’s better to wait and focus instead on increasing take-up rates.
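To make the link between small effects and large samples concrete, here is a rough sketch of a standard sample-size calculation for comparing two proportions, such as take-up rates in a control and a treatment group. It uses the common normal-approximation formula and is not the method of any study described here. The function name, the default significance level (5 percent) and the default power (80 percent) are illustrative assumptions.

```python
import math
from statistics import NormalDist


def sample_size_per_arm(p_control, p_treatment, alpha=0.05, power=0.80):
    """Approximate users needed per arm to detect p_control vs. p_treatment.

    Uses the normal approximation for a two-sided test of two proportions:
        n = (z_{1-alpha/2} + z_{power})^2
            * [p_c(1 - p_c) + p_t(1 - p_t)] / (p_c - p_t)^2
    """
    if p_control == p_treatment:
        raise ValueError("The two rates must differ to define an effect size.")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for the test
    z_beta = NormalDist().inv_cdf(power)           # quantile for the desired power
    variance = p_control * (1 - p_control) + p_treatment * (1 - p_treatment)
    n = (z_alpha + z_beta) ** 2 * variance / (p_control - p_treatment) ** 2
    return math.ceil(n)
```

Under these assumptions, detecting a rise in take-up from 5 percent to 6 percent requires on the order of eight thousand users in each arm. Halving the expected effect roughly quadruples that number, which is why products with only a few hundred users are poor candidates for rapid-fire testing.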

Even so, primarily because RFTs can be run on larger samples and generate rapid results at low cost, they seem destined to play a key role in experimentation within financial institutions.

IPA/B Testing

The Financial Inclusion Program at IPA is partnering with leading financial service providers to run a number of rapid-fire studies to better understand client preferences towards financial product features and design variations. By leveraging our academic network and low-cost data collection technologies such as Interactive Voice Response (IVR) calling, these rapid-fire tests are both grounded in theory and supplemented by additional, low-cost data collection.

For example, the study with Telenor Easypaisa, described above, uses a combination of low-cost SMS, IVR and call center surveys with a sample of more than 1 million subscribers to add data points to an already robust database. The survey questions focus on user experience and likelihood to refer other clients, which allows researchers to measure reported behavior in addition to the behavior observed through transaction patterns.

Another interesting example is a series of tests of SMS messaging campaigns with financial institutions around the world to identify the content characteristics, such as personalization or action-oriented frames, that are most effective in encouraging clients to save more or pay down debt. This effort builds on an initial study in the Philippines, Peru, and Bolivia that found that the right messages could raise savings balances by as much as 16 percent. Credit-focused studies in Uganda and the Philippines found that SMS reminders to repay loans on time can be as effective as more costly incentives like reducing the interest rate. Parallel work in the United States evaluates the effect of various text message reminders on the outcomes of clients struggling to repay their debt, in essence setting up A/B tests to determine the most effective way to drive product adoption. Many other studies are incorporating messaging as low-cost side treatments as well.

Other similar rapid-fire experiments have studied the impact of varying message content on customers. Four recent examples are:

- Altering e-mail content to test the impact of emails on retirement savings choices among employees in the United States

- Tweaking advertising content to see the impact of loan prices and deadlines on loan demand from South African consumers

- Changing SMS content to observe account holders’ demand for bank overdrafts in Turkey

- Adjusting loan offers to determine demand for sharia-compliant credit products in Jordan

A Powerful Methodology in the Product Design Toolbox

Despite their potential limitations, rapid-fire experiments have enormous potential benefits. We understand that many organizations are concerned about the cost of a multi-year, survey-driven RCT. If that is the case, consider a rapid-fire experiment in addition to any low-cost data collection. Having a rigorous experimental framework will significantly augment the quality of your findings.

In March 2016, IPA and the Center for Effective Global Action (CEGA) launched the Goldilocks Toolkit, a resource designed for organizations that want to improve their evaluation learning but aren’t candidates for a full-scale RCT. The toolkit includes rapid-fire testing as one of many methodologies organizations can adopt if the conditions are right.

By combining IPA’s expertise in behavioral economics and RCT implementation with the business acumen of the partners we work with, rapid-fire tests can add rigorous evidence to the product innovation process. Through leveraging administrative data and scalable technologies, we see rapid-fire testing as an exciting way to evaluate and improve financial services for the poor, accelerating the learning curve and serving as an important complement to other methodologies.

Photo credit: Innovations for Poverty Action.

Homepage photo: Kenneth Buker via Flickr.

Aaron Dibner-Dunlap is an Initiative Manager at Innovations for Poverty Action, and Yumna Rathore is a Project Coordinator at the Center for Economic Research in Pakistan (CERP).
