The Impact Sector is Confusing Satisfaction with Impact: Rethinking the Growing Reliance on Perception Surveys

In the evolving landscape of impact investment and social finance, measurement standards have shifted toward perception surveys (forms that ask beneficiaries to rate their personal experience with a program) to quantify success. The trend is understandable: more rigorous evaluations are often expensive and time-consuming, and they are not always the best use of limited resources in budget-constrained fields like social impact and global development. In contrast, perception surveys are cost-effective and give customers and beneficiaries a vital voice.

Yet crucially, perception surveys usually tell the type of success story that everyone wants to share and hear. Beneficiaries tend to primarily report positive experiences with a program or enterprise, funders see positive social returns, and programmatic teams receive validation for the value of their work.

While investors and social enterprises increasingly rely on these surveys to craft compelling narratives, we have found that they tell only one side of the impact story. This data is relevant to assessing impact, but it paints an incomplete picture. It needs to be accompanied by additional data and methods that allow us to understand whether the changes we expect our programs, enterprises and investments to make are actually happening in the communities where we operate.

As a learning organization that documents not only our successes but also our failures, Root Capital has deep expertise in the challenges of assessing impact, based on over 25 years of experience in finance and capacity building. We believe that true learning journeys require us to look deeper and share data that challenges assumptions, even when results fall short of the “perpetual optimism” typically found in impact reports. This article shares an overview of a recent Root Capital study revealing a fundamental attribution error that occurs when relying solely on perception data — one we’ve had to push back against in our own interactions with internal and external stakeholders.

When Perception Masks Low Program Effectiveness

Since 2019, we have conducted a Digital Extension Advisory Service (DEAS) program in Rwanda, and several rigorous impact assessments have found that it was successful in improving knowledge and adoption of agronomic practices among coffee farmers there.

We wanted to determine if this program could be effectively replicated for macadamia farmers in Kenya.

So in 2024, when we expanded the program to this new sector and geography, we measured it with an objective evaluation, which included an embedded randomized controlled trial (RCT), combined with a survey assessing beneficiaries’ perception of the service (aligned with the donor’s request to use a perception metric to assess the success of the program).

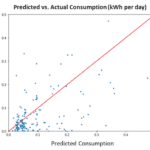

While the perception surveys suggested the service delivered value to recipients, our objective assessment revealed that the program failed to achieve its primary objective: increasing farmers’ knowledge of good agricultural practices. Our evaluation highlighted a sharp contrast between how the DEAS beneficiaries felt about the service and what they actually learned from it. Our initial assessments of farmers’ perceptions suggested a positive outcome, with more farmers categorized as “promoters” than “detractors” of the service. Based solely on this metric, the service appeared successful, as most farmers stated they would recommend it to family or friends.
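The "promoter" and "detractor" labels above follow the logic of a Net Promoter Score. As a minimal sketch, assuming the conventional 0-10 "would you recommend" scale with the standard cutoffs (the article does not state the exact scale used in the DEAS survey, and the ratings below are invented for illustration), the classification can be computed like this:

```python
def net_promoter_score(ratings):
    """Classify 0-10 ratings and return (promoters, passives, detractors, nps).

    Assumes the conventional cutoffs: 9-10 = promoter, 7-8 = passive,
    0-6 = detractor. The DEAS survey may have used a different scale.
    """
    if not ratings:
        raise ValueError("need at least one rating")
    promoters = sum(1 for r in ratings if r >= 9)
    detractors = sum(1 for r in ratings if r <= 6)
    passives = len(ratings) - promoters - detractors
    # NPS is the percentage of promoters minus the percentage of detractors.
    nps = 100.0 * (promoters - detractors) / len(ratings)
    return promoters, passives, detractors, nps


# Hypothetical ratings, invented purely for illustration (not study data).
example_ratings = [10, 9, 9, 8, 7, 10, 6, 3, 9, 8]
print(net_promoter_score(example_ratings))
```

Note that a positive score only says that promoters outnumber detractors among those who answered. It says nothing about what respondents learned.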

However, a deeper analysis of learning outcomes told a different story. First, we identified a problem with the representativeness of the data. While over 6,000 farmers were registered, only 6% recalled receiving training materials and only 9% responded to the final survey. This suggests that the positive feedback might reflect only a small, highly engaged minority rather than the broader registered group.
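To make the scale of the gap concrete, here is a rough back-of-the-envelope sketch. It assumes exactly 6,000 registered farmers (the article says "over 6,000") and reads both percentages as shares of all registered farmers, which is an interpretation rather than something the article states. The function name is hypothetical.

```python
def coverage(registered, recall_pct, response_pct):
    """Approximate head counts implied by the reported percentages."""
    recalled = round(registered * recall_pct / 100)
    responded = round(registered * response_pct / 100)
    # Farmers whose views the final survey cannot tell us anything about.
    not_heard_from = registered - responded
    return recalled, responded, not_heard_from


recalled, responded, not_heard_from = coverage(6000, 6, 9)
print(f"recalled materials: ~{recalled}")
print(f"answered survey:    ~{responded}")
print(f"no survey response: ~{not_heard_from}")
```

Under these assumptions, the satisfaction figures rest on a few hundred farmers, while the views of several thousand registered farmers remain unknown.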

We also used an RCT that compared a “treated” group of farmers to a “control” group, and a difference-in-differences analysis, both of which found no significant improvement in farmers’ knowledge. These null results underscored a critical lesson: Beneficiary or client goodwill and satisfaction with a program may be an unreliable proxy for program efficacy. Relying on perception data alone would have led us to the false conclusion that the program was ready for scale in the macadamia sector. But since the results of the objective evaluation showed no knowledge gains, we decided not to continue with this attempt to expand the DEAS program.
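For readers less familiar with difference-in-differences, the sketch below shows the core idea: compare the change in the treated group's knowledge scores with the change in the control group's scores over the same period. The scores are hypothetical and chosen only to illustrate a null result. This is not the study's data or its actual estimation code, and a real analysis would also test for statistical significance.

```python
from statistics import mean


def diff_in_diff(treated_pre, treated_post, control_pre, control_post):
    """Change in the treated group minus change in the control group."""
    treated_change = mean(treated_post) - mean(treated_pre)
    control_change = mean(control_post) - mean(control_pre)
    return treated_change - control_change


# Hypothetical knowledge-test scores (illustration only, not study data).
treated_pre = [4, 5, 6, 5]
treated_post = [5, 6, 7, 6]
control_pre = [5, 4, 6, 5]
control_post = [6, 5, 7, 6]

# Both groups improve by the same amount, so none of the improvement
# can be attributed to the program.
print(diff_in_diff(treated_pre, treated_post, control_pre, control_post))
```

In this invented example, treated farmers do score higher after the program, but so do control farmers. A before-and-after comparison of participants alone would have mistaken that shared trend for impact.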

Reporting these kinds of results may not fit the conventional narrative of the impact space, but it is essential if we want to support the efforts of agricultural small and medium enterprises to spur rural resilience.

How to Avoid Confusing Satisfaction With Impact

The key takeaway is that organizations risk strategic failure when they confuse high beneficiary satisfaction with genuine program impact. Satisfaction is a valuable measure of user experience, but we must also assess whether a service achieved the core objectives it was set up to achieve. The contradictory findings from our evaluation point to a broader issue in the field: how easily perception-based metrics can mask a program’s ineffectiveness, potentially leading organizations to scale interventions that do not work.

While perception surveys provide valuable information on how services are received, we believe it is important to complement them with objective measures of intended outcomes (i.e., whether the program is meeting its objectives) and cost-effectiveness analysis.

Our experience scaling DEAS offers four actionable principles to help senior leaders navigate the pressure to demonstrate impact while avoiding costly mistakes:

1. Treat Satisfaction Metrics as User Experience Measures, Not Proof of Effectiveness

Perception surveys provide relevant information on how a service is received, but they should not be used as an absolute claim of actual program impact. We must measure whether the changes we expected to see in the world actually materialized.

In our case, as valuable as user experience was for DEAS (e.g., building the relationship with the farmers), the program did not provide them with the knowledge it was designed to convey. In that sense, the satisfaction surveys masked the program’s failure to teach the knowledge farmers needed to improve their productivity and adopt better agricultural practices.

2. Reserve Rigorous Causal Evaluation for Strategic Decision Points and Building an Evidence Base

Given these downsides of perception surveys, it is tempting to demand a rigorous causal evaluation for every program. But applying expensive methods like RCTs to every program is unrealistic in budget-constrained environments. Instead, organizations should reserve these sophisticated impact evaluations (using RCTs or other econometric methods) for high-stakes decisions, such as new market entry or scaling programs into a different context.

Formal impact evaluations may also not be necessary for established programs where a robust body of literature already demonstrates effectiveness. For example, Root Capital has conducted over 10 impact evaluations showing that our ESG due diligence, paired with advisory services and lending, increases the income of affiliated farmers. As a result, we do not see a need to conduct an evaluation for every loan we close; instead, we can assume that under similar conditions, these effects persist. However, we do need to periodically test this assumption to ensure it remains valid. Once a strong evidence base is established to back impact claims, it becomes more appropriate to rely on lighter-touch methods, such as perception surveys.

3. Recognize Data Bias When Estimating Attributable Impact

Digital data gathering approaches, like SMS- or interactive voice response (IVR)-based surveys, often face challenges in creating representative samples of poorer populations. Factors such as the lack of network coverage in rural areas often skew results in favor of wealthier, male farmers who live near urban centers, have higher education levels and own their own devices. As a result, it’s important to use methods that account for this bias. In our study, for example, we estimated the intention-to-treat effect, which counts everyone offered the service (including those who did not engage) and so gives a realistic, honest estimate of true impact.
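The difference between an intention-to-treat estimate and a comparison based only on engaged users can be sketched as follows. All numbers are hypothetical and the function names are illustrative. This is a simplified difference in means, not the study's actual estimator.

```python
from statistics import mean


def intention_to_treat(offered, control):
    """Mean score of everyone offered the service minus the control mean.

    `offered` is a list of (score, engaged) pairs. Engagement is ignored
    on purpose, so non-engagers count toward the estimate.
    """
    return mean([score for score, _ in offered]) - mean(control)


def engaged_only(offered, control):
    """Naive comparison that keeps only farmers who engaged (biased)."""
    return mean([score for score, engaged in offered if engaged]) - mean(control)


# Hypothetical knowledge scores (illustration only, not study data).
offered = [(8, True), (5, False), (5, False), (6, False)]
control = [5, 6, 7, 6]

print(intention_to_treat(offered, control))  # averages over all four farmers offered the service
print(engaged_only(offered, control))        # looks only at the one engaged farmer
```

Because farmers who choose to engage may differ from those who do not (more educated, better connected), the engaged-only comparison can show a gain that reflects who engaged rather than what the service taught them.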

4. Recognize Contextual Sensitivity to Prevent Scaling Mistakes

An intervention that proves effective in one region may not work in another. In the case of the DEAS program, understanding why it failed in the macadamia industry, where the relationship between aggregators and farmers differed from the coffee sector, was crucial to preventing scaling errors. By including a rigorous evaluation in the Kenya DEAS program — despite the fact that we had already evaluated the program with apparent success across coffee clients — we were able to test whether the assumption held in another sector.

Different Types of Data Serve Different Purposes

Reporting null results in our evaluations is always challenging. While negative findings can be equally informative, they often face publication bias — both within the organization and among external publications — leading to a skewed understanding of the field. Furthermore, sharing these findings can cause donors to pull funding, or programmatic teams to feel disillusioned with work that shows little return after significant investments of time and resources.

However, despite these concerns, it’s essential to understand and report on negative findings, especially when considering the potential expansion of an initiative. In the case of the DEAS program, we learned that while reaching out to farmers through SMS or IVR methods can be positive for building rapport and loyalty, in their current state, these methods are not effective tools for knowledge transmission in the Kenyan macadamia industry. Our experience with the DEAS evaluation has informed Root Capital’s other programmatic decisions, helping us determine whether to move forward with a program or service.

Our report also serves as a field-building opportunity. The contrast between farmers’ positive survey responses and their actual performance on knowledge assessments shows why services and programs need to be assessed robustly, rather than through perception-based metrics alone.

As a learning organization, we believe in sharing stories of success. But we also believe in admitting when projects, businesses or investments fell short, and where impact was not what we envisioned. Doing these evaluations and sharing these learnings reminds us of the importance of pushing back on assumptions, and maybe helps us and others do things a little bit better.

Juan Taborda Burgos is Director of Impact Monitoring, Evaluation and Learning, Jorge Bouchot is the Senior Manager of Research and Impact Evaluation, and Miranda Hansen is a Senior Analyst in Impact Evaluation at Root Capital.

Photo credit: bo feng
